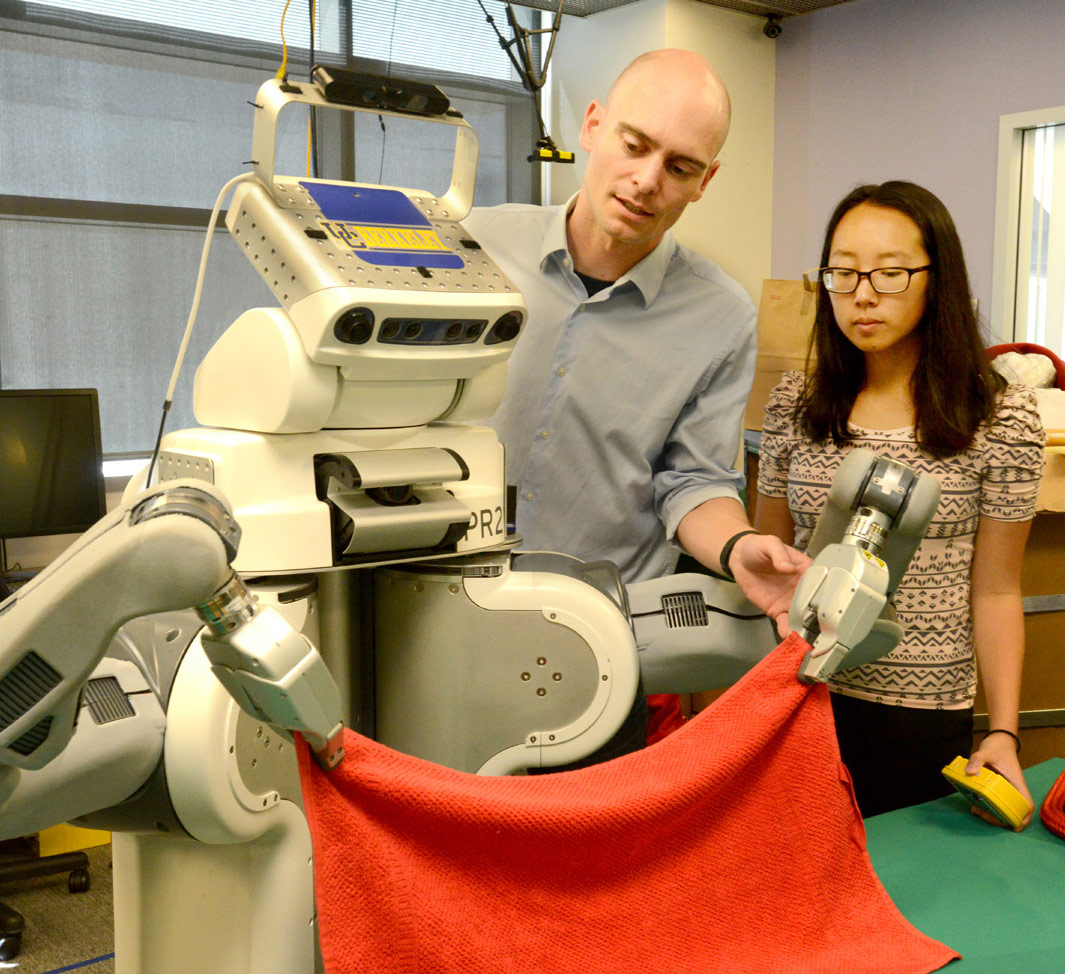

Watson is more capable and human-like than ever before, especially when embodied in a robot. We saw this first-hand at NVIDIA’s GPU Technology Conference (GTC), where Rob High, an IBM Fellow, vice president, and chief technology officer for Watson, introduced attendees to a Watson-powered robot. During the demonstration, the robot responded to queries much as a human would, using movement as well as speech. When Watson’s dancing skills were called into question, the robot responded by showing off its Gangnam Style moves.

This is the next level of cognitive computing now taking shape, both in what Watson can do when given the proper form and in what it can sense. Much like a real person, the underlying AI can get a read on people through their movement and through cognitive analysis of their speech, picking up on mood, tone, and inflection.

Source: IBM’s Watson Cognitive AI Platform Evolves, Senses Feelings And Dances Gangnam Style