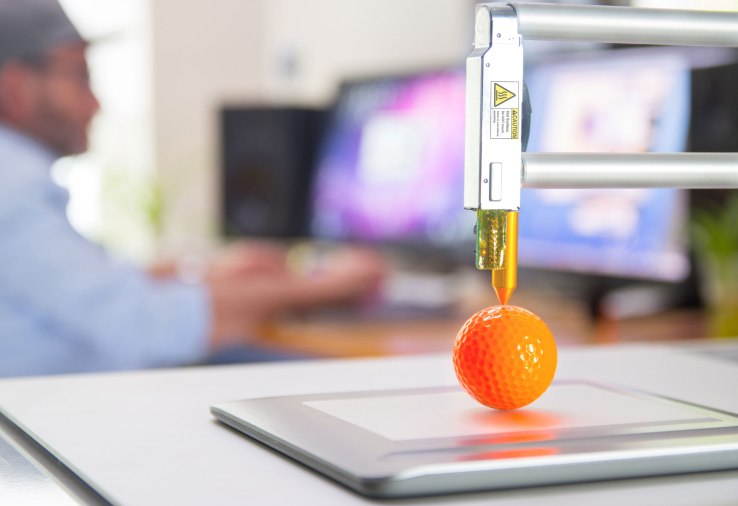

“AI today is able to diagnose your personality and emotional state by looking at your face and recognizing tiny muscle movements. It can tell whether you are tired, excited, angry, joyful, in love … it can tell these things even though AI itself doesn’t feel anger or love.”

In the future, therefore, AI could “drive humans out of the job market and make many humans completely useless, from an economic perspective” in areas where human interaction was previously considered crucial, Harari said.

“In customer services departments they have started using AI to assess the emotions of people who are calling,” he said. “AI analyzes the tone of your voice and choice of words … and recognizes both your personality type and also your immediate emotional condition.”